Background

I have worked at M*Modal since July 2017, where I advocate for and manage research across six products and services. In February 2019, M*Modal was acquired by 3M and became part of its healthcare business group. In March 2020, I was promoted to Senior Design Researcher.

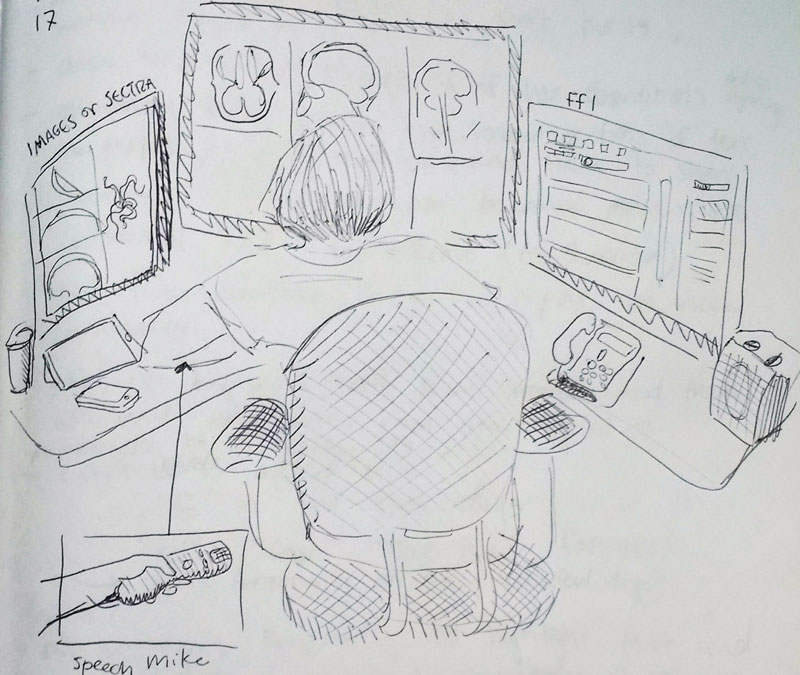

3M | M*Modal’s flagship product is speech recognition software for physicians, allowing them to dictate their medical notes rather than type. Building on top of this are additional physician-facing tools which use natural language processing to “read” over documents and make suggestions and recommendations. Additionally, we make products for medical coders, billers and remote medical scribes.

Research

Identifying and planning

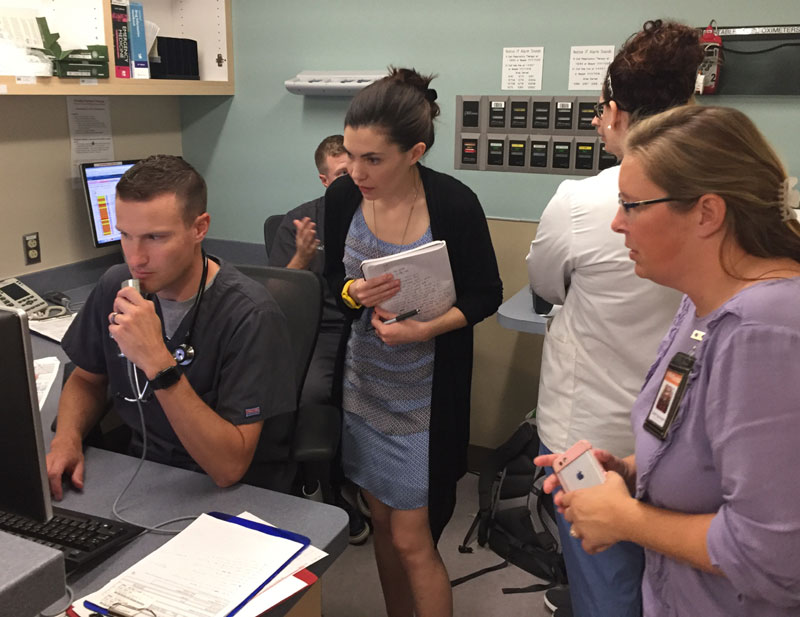

I work closely with each design lead and product owner. I listen for explicit requests as well as quiet opportunities for research, and, because I am the first and only research-focused designer within M*Modal, I prioritize projects where research could have the largest impact. After identifying a research project, I work closely with others to determine key questions and hypotheses, then select appropriate and feasible methods. I’ve used contextual inquiry, speed dating, usability testing, interviews, card sorting and Wizard of Oz, among others. I have also run workshops and remote observation sessions with internal teams to bring them into the design process.

Recruiting

I am responsible for recruiting research participants, which is a challenge for several reasons. This is enterprise software, so end users are not the ones paying for the product. Additionally, when the end users are physicians, they are highly paid, stressed and time-crunched, and hidden behind layers of internal and external stakeholders. In addition to building relationships with these stakeholders, it was very important for me to cultivate a group of “physician friends” whom I could contact directly for research needs. Since starting in this position, I have grown a network from 0 to over 90 research-eager physicians and administrators, many of whom have participated in multiple studies. I also worked to get a “research clause” added to new sales contracts.

Analyzing and delivering

Once I have completed a study, I process all results and perform synthesis exercises such as affinity diagramming and mind mapping. I share “research snacks” (short insights, quotes and tidbits) in relevant Slack channels as well as give in-progress updates during scrums. Once my analysis is finished, I write up detailed results pages on an internal wiki with a high-level “TL;DR” (too long, didn’t read) takeaways and recommendations section. Finally, I share the results of the research via slide deck presentations in small meetings, dedicated meetings and all-hands company meetings. I aim to make my research results accessible, memorable and actionable.

Additionally, a large part of my job has been to educate colleagues and promote a culture of design research within the company. This not only advances my aim of product decisions backed by research insights, but also helps build relationships. I have led hands-on design methods trainings, coached product teams to remove bias from interview questions, and even recorded an educational podcast for the sales team.

Conversational design

Our existing technologies of speech recognition, natural language processing and understanding have enabled 3M | M*Modal to enter the world of multi-modal voice user interface (VUI). Physicians face enormous administrative and clerical burdens on top of caring for patients and experience high rates of burnout. To alleviate this, we are driven to help physicians beyond just dictation by completing routine tasks and writing some (or all) of the medical note for them. We refer to this as ‘ambient clinical intelligence’. I was hired to research physician desires and preferences for such a tool, as well as investigate how we might slowly add in new technology while maintaining trust and physician satisfaction.

My initial research answered questions like “what do physicians want an assistant to do?”, “how should a physician assistant behave and speak?”, “how much proactive help is useful and not creepy or patronizing?”, and “should an Assistant speak in the room in front of a patient?”, among others. To gather answers, I completed a rigorous, multi-faceted exploratory project at the outset, before moving on to generative and evaluative phases. At every step of the way, design manager Anna Abovyan and I have been involved at the cutting edge of development and roadmap decision-making.

In addition to research, I have assisted with the design and development process. When Anna left 3M in September 2020, I took over all interaction and conversation design work. I have created storyboard vision documents, design principles, dialogue flows and high-fidelity UI specs, and written on-screen messaging, including error recovery and escalating error messages. I also performed QA testing on early prototypes before we hired dedicated QA. Additionally, at the request of the speech and Natural Language Understanding (NLU) teams, I spearheaded the voice data collection effort with physicians. I then analyzed these utterances and made recommendations to NLU about grammars: where intents and slots might be located within a sentence. This work also generated lists of words for our speech team to build into the recognizers.

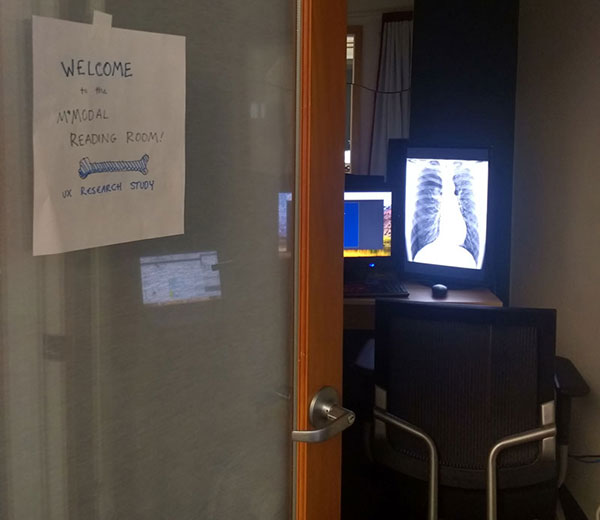

Project management of alpha pilot

Desperate for more physician feedback, in the summer of 2019 I recruited two local family physicians who were willing to participate in an alpha pilot. I project-managed this alpha for six months, which entailed deploying and supporting hardware and software, watching metrics and usage logs, sharing new releases, writing tickets and providing critical feedback to development teams.

Launch

Today, 3M | M*Modal’s ambient assistant is released into the wild in several forms. It is used by physicians at major hospital networks across the United States, and we are continuing to add functionality and improve existing capabilities. You can read more about the product and its impact here, and see 3M’s product page here.